FetchBPF: Customizable Prefetching Policies in Linux with eBPF

1. Introduction

FetchBPF is an eBPF-based framework that lets you plug custom page-fault–driven prefetching policies into the Linux kernel—using safe hooks and helpers—so they behave like native kernel prefetchers without needing to modify the kernel itself.

There are existing problems in the research done before them.

- First, a one-size-fits-all prefetcher that provides optimal performance for all applications does not exist. A new prefetching policy might excel in one memory access pattern but not another. For example, Leap outperforms the built-in Linux prefetcher – which is optimized for sequential access patterns – when an application exhibits a large strided pattern; however, it lags behind Canvas for workloads that involve chasing a large number of pointers.

- Naïve idea: ship many kernel prefetchers and choose among them. However, it’s unclear if you can cover all patterns with any finite set of policies, and implementing and maintaining new kernel policies is extremely expensive engineering-wise

- Another idea: do prefetching from user space, but this causes huge overhead due to constant context switching, and prefetching needs to be done as quick as possible.

- Introducing: FetchBPF, loading new prefetching policies in the kernel with negligible performance overhead using eBPF. FetchBPF modifies kernel by adding new eBPF hooks to implement custom prefetching policies and additional eBPF helper functions to evoke necessary kernel mechanisms.

Contributions

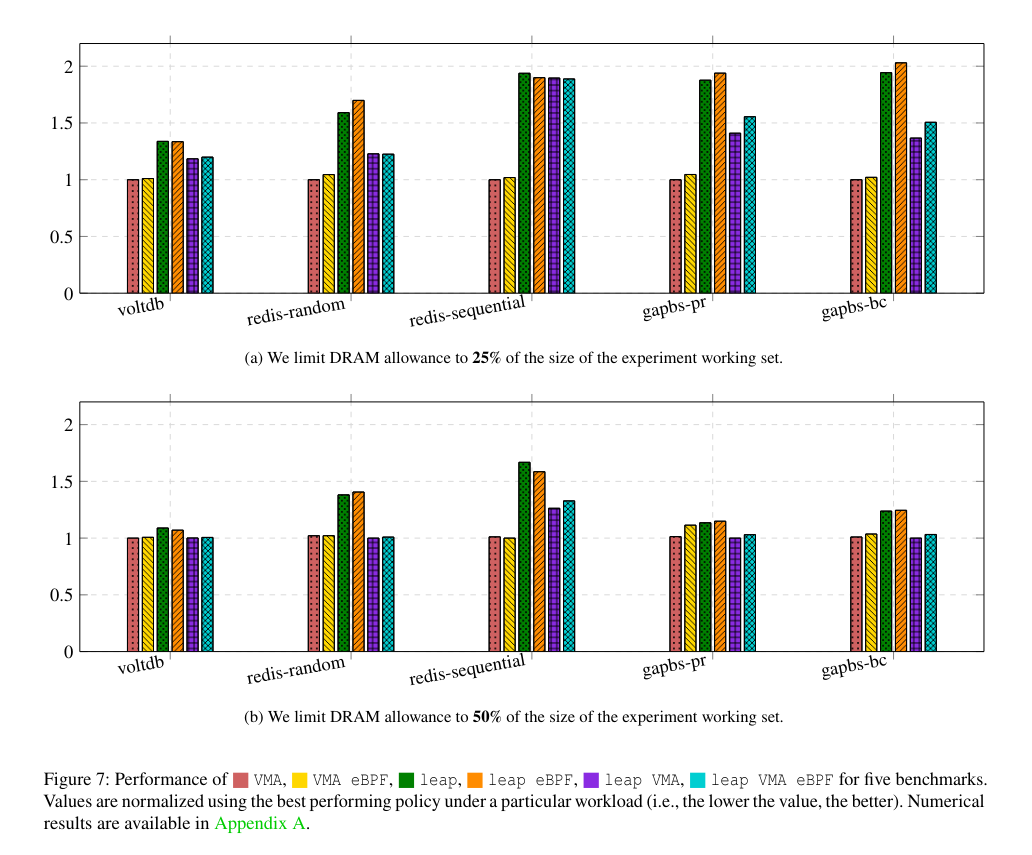

- They empirically show that applications with different memory access patterns benefit from different policies.

- They propose an extension to the Linux eBPF framework to implement prefetching policies.

- They implement and evaluate a number of policies from the literature to showcase FetchBPF.

2. Background & Motivation

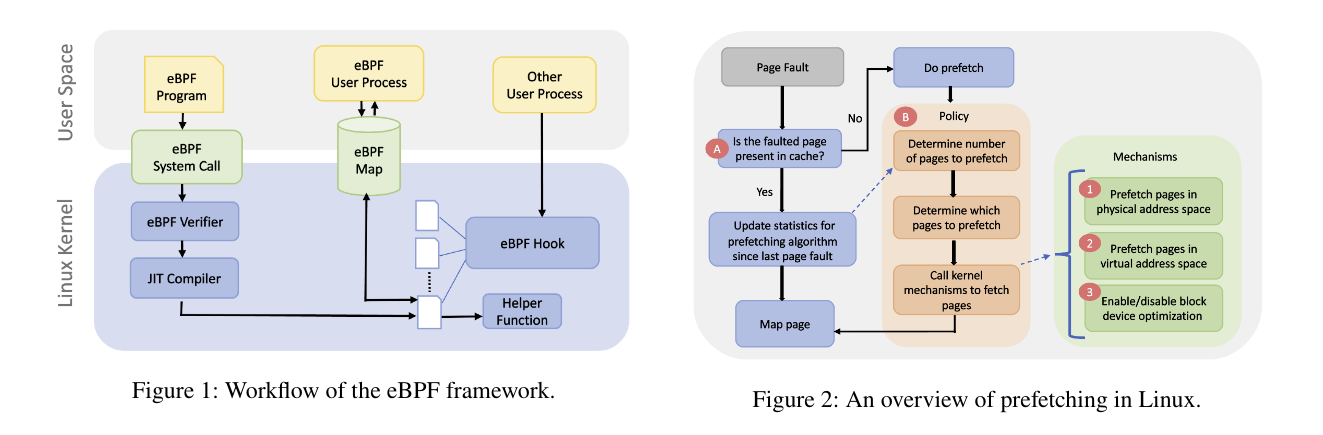

The extended Berkeley Packet Filter (eBPF) is a Linux framework that allows users to extend the kernel without any modification to the kernel’s source code. eBPF programs are event-driven, invoked when the hooks they are attached to are triggered. eBPF supports a variety of kernel hooks, e.g., system calls, tracepoints, and kernel probes.

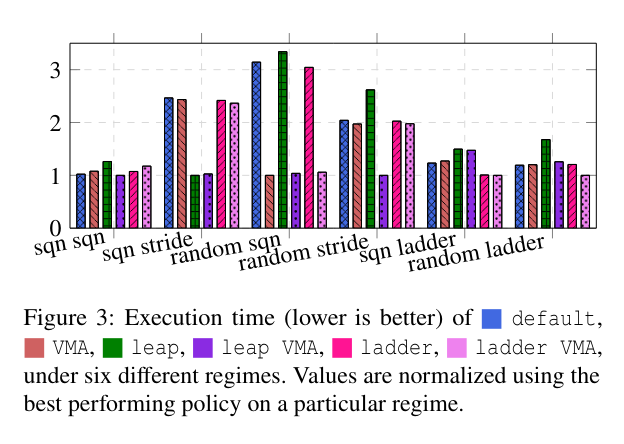

When a page fault is detected, the kernel first checks if the page resides in the page cache. A minor fault occurs when the page is present in the cache but not yet mapped. The kernel simply updates the page-fault statistics and maps the page. If the page is not found in the cache, then a remote request is issued to bring the page to memory. As remote accesses are expensive (e.g., when reading from the disk), in addition to loading the requested page, the kernel prefetches pages that it predicts will be used in the near future.

The figure 3 shows the execution time of six prefetching policies for six regimes. We observe that no policy outperforms the others in all regimes. Clearly, deploying policies that best fit specific workloads can significantly improve performance.

3. Design & Implementation

Kernel is modified to include two additional hook points where prefetching-related eBPF programs can be triggered:

A) when a page fault occurs and B) when the kernel decides on the specific pages to prefetch.

They also include additional eBPF helper functions which help developers evoke kernel mechanisms related to page prefetching.

Design Process

(A) Existing prefetching policies typically require as input a history of memory accesses, so FetchBPF should allow developers to customize the capture and computation of them through an eBPF program.

(B) Prefetchers run before a faulting page is requested; prefetching requests are then issued alongside with the page. FetchBPF should enable the prefetcher to call an eBPF custom prefetching logic.

eBPF Hooks

(A) prefetch_stats: FetchBPF triggers an eBPF program attached to this hook every time a page fault occurs, including both major and minor faults. Policies such as Leap use the history of faulting addresses to gain insight. Parameters passed to it include information about the physical and virtual address of the faulting page to support policies based on these addresses.

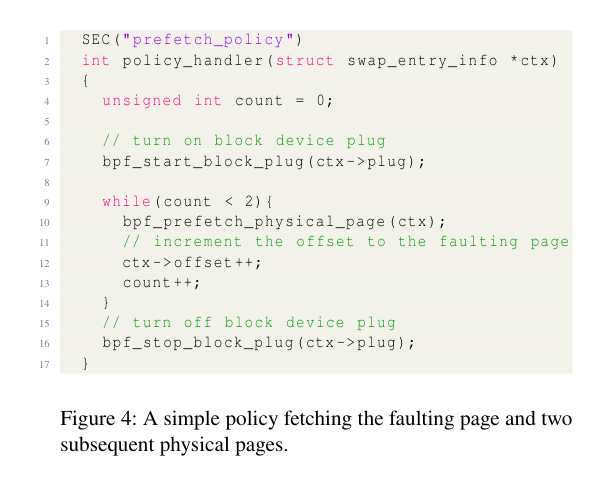

(B) prefetch_policy: FetchBPF triggers an eBPF program attached to this hook before the execution of the default Linux prefetch policy. PA and VA of faulting page are passed to this as well. The eBPF programs attached to this hook are expected to (1) identify pages of interest and (2) request the kernel to prefetch them through helper functions. They must return either an error code, or one of the two values: PREFETCH_RA_DEFAULT_PREFETCH or PREFETCH_RA_SKIP. The former tells to run the default policy afterwards, while the latter tells to skip it.

Helper Functions

eBPF programs are limited to their program parameters and kernel-provided helper functions. FetchBPF implements the following helper functions:

(1) bpf_prefetch_physical_page: This helper function triggers the mechanism to prefetch pages based on physical addresses. It takes as input the parameters given to the eBPF program. Specifically, the information about physical swap entry offsets is used to perform page I/O.

(2) bpf_prefetch_virtual_page: This helper function triggers the mechanism to prefetch pages based on virtual addresses. It takes the same input as the previous helper function; however, the function prefetches pages based on pte (page table entries) and pte offsets.

(3) bpf_<start/stop>_block_plug: These two helper functions enable a policy to control prefetching request batching. All requests made after bpf_start_block_plug are batched. When bpf_stop_block_plug is called, batched requests are processed (e.g., sorted and merged) to optimize I/O.

4. Policy Example

5. Evaluation

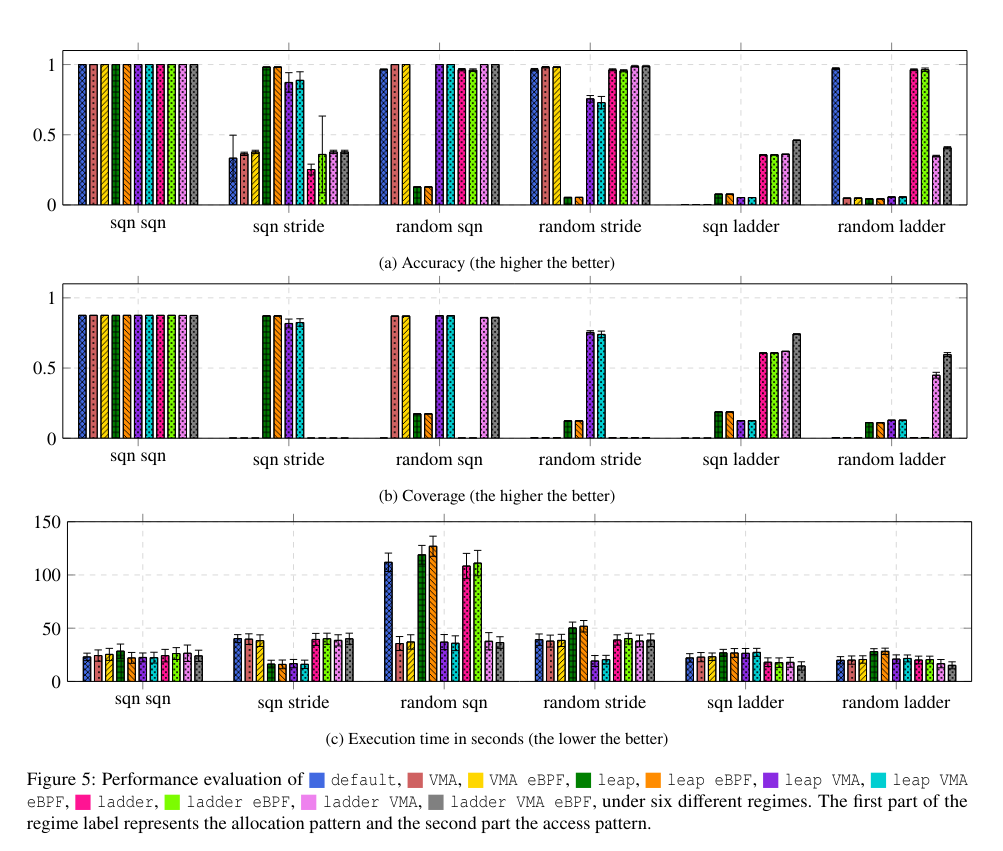

Microbenchmarks (5 GB array allocation/access)

Accuracy = cache hits / # of prefetched pages

Coverage = cache hits / (cache hits + cache misses)

Allocation Patterns: (1) Sequential allocation (sqn), where we allocate array elements (pages) in order in physical memory, and (2) random allocation (random), where we allocate elements randomly.

Access Patterns: (1) Sequential (sqn), where we access array elements in a sequential order, (2) stride (stride), where we access array elements on every third virtual page, (3) ladder (ladder), where we access array elements with an increasing stride with every access.

We care about allocation patterns because a prefetcher’s behavior depends not only on how you access pages (access pattern), but also on how those pages are laid out in physical/swap space (allocation pattern).