PageFlex: Flexible and Efficient User-space Delegation of Linux Paging Policies with eBPF

Design Goals

- Compatibility: Solution must coexist with current kernel-based policies and swap backends; i.e., some workloads running with the default policies and others using PageFlex but sharing the same swap backend.

- Fail-safety: Failure of any PageFlex component (e.g., due to policy bugs) should neither affect other workloads nor crash the applications whose policy PageFlex handles.

- Minimal overheads: Any overheads introduced by the PageFlex infrastructure must be minimal. More importantly, overheads that directly impact the application performance must be negligible.

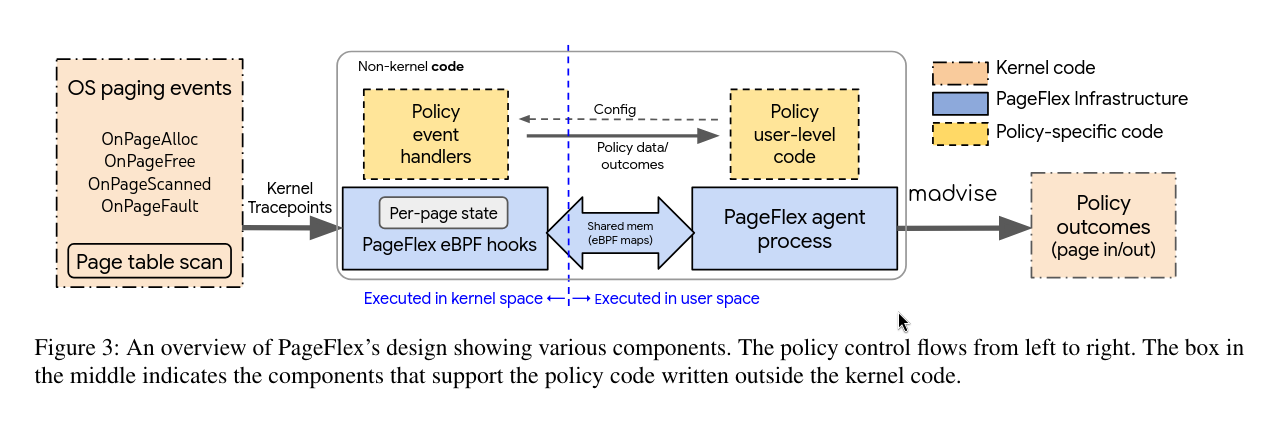

To achieve these goals, PageFlex only externalizes non-critical policy decisions for prefetching and proactive reclamation to user space, while the kernel continues to provide core mechanisms for page access tracking, on-demand page fault handling and page reclamation. PageFlex uses eBPF programs to provide a flexible, low-overhead view of in-kernel memory state to the externalized policy code. More specifically, policies run their performance-critical code inline with the kernel paths using eBPF to flexibly process the page events as they occur, without incurring the overheads of exporting large volumes of page event metadata to user space.

System Overview

At a high level, PageFlex consists of a kernel component (left half of the figure) with eBPF programs, where policies run in-band with the kernel events to get a low-overhead view of memory and arrive at paging decisions. These decisions are then shared out-of-band with a user-level component (right half), which enforces them and implements any policy infrastructure that is not performance-critical.

In-kernel policy execution

PageFlex tracks various basic paging events with explicit kernel tracepoints. Policies then subscribe to (any subset of) these events and provide event handlers that PageFlex runs synchronously with the paging events in the kernel as eBPF programs.

Kernel paging events

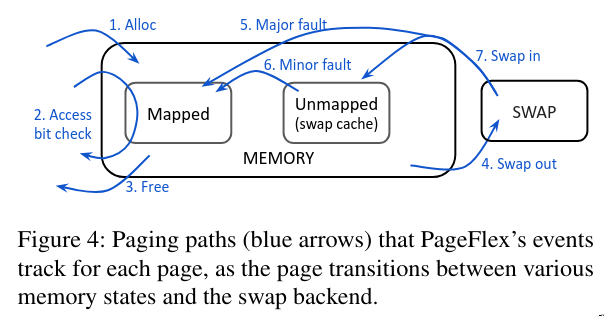

There are four paging events:

- OnPageAlloc: arrows 1 and 7
- OnPageFree: arrows 3 and 4
- OnPageFault: arrows 5 and 6
  - reports swap-cache hit/miss
  - page fault that occurs due to demand paging is not considered
- OnPageScanned: arrow 2
  - PageFlex periodically collects page access bits, and during that scan it raises OnPageScanned for each page it scans.

eBPF-based custom event handlers

Policies subscribe to (any subset of) the above events and provide custom handler functions that PageFlex runs inline with the events as eBPF programs. The event handlers run synchronously with the paging paths, but the performance impact is small because each eBPF invocation costs very little (<50 ns), which lets PageFlex use handlers aggressively even in high-frequency paths.

Low-overhead per-page state

Most new policies in the literature, including learning-based policies like LRB, need to maintain per-page metadata. PageFlex therefore adds a 4 B state field to the kernel's page struct. This costs about 0.1% additional memory, which the authors consider an acceptable trade-off. A policy can partition the 4 B into several sub-fields and use each for a different purpose.
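As a rough illustration of partitioning the 4 B field, the sketch below packs three sub-fields into one 32-bit word and unpacks them again. The field names and widths (16-bit weight, 8-bit idle age, 8-bit flags) are assumptions made for this example, not PageFlex's actual layout. The last helper reproduces the roughly 0.1% overhead figure under the assumption of 4 KiB pages.

```python
from typing import Tuple

# Hypothetical layout of the 32-bit per-page state. The field names and
# widths are illustrative assumptions, not PageFlex's actual layout.
WEIGHT_BITS, AGE_BITS, FLAG_BITS = 16, 8, 8
AGE_SHIFT = WEIGHT_BITS
FLAG_SHIFT = WEIGHT_BITS + AGE_BITS


def pack_state(weight: int, age: int, flags: int) -> int:
    """Pack three sub-fields into one 32-bit word.

    weight and age saturate at their maximum; flags are masked.
    """
    weight = min(weight, (1 << WEIGHT_BITS) - 1)
    age = min(age, (1 << AGE_BITS) - 1)
    flags &= (1 << FLAG_BITS) - 1
    return weight | (age << AGE_SHIFT) | (flags << FLAG_SHIFT)


def unpack_state(word: int) -> Tuple[int, int, int]:
    """Split a 32-bit word back into (weight, age, flags)."""
    weight = word & ((1 << WEIGHT_BITS) - 1)
    age = (word >> AGE_SHIFT) & ((1 << AGE_BITS) - 1)
    flags = (word >> FLAG_SHIFT) & ((1 << FLAG_BITS) - 1)
    return weight, age, flags


def state_overhead(page_size: int = 4096, state_bytes: int = 4) -> float:
    """Fraction of memory spent on per-page state (assumes 4 KiB pages)."""
    return state_bytes / page_size
```

With these assumed widths, `unpack_state(pack_state(1000, 7, 3))` gives back `(1000, 7, 3)`, and `state_overhead()` is 4/4096, just under 0.1%.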

User-level policy control and enforcement

PageFlex employs a dedicated user-level agent process to initialize and run policies for the applications that opt in to PageFlex. The agent process loads the policy’s eBPF handlers and lets policies configure them through eBPF maps. Policies can push events (e.g., page in/out decisions) to user space and handle them asynchronously with user-level handlers. Additionally, policies can perform any non-critical and complex functionality in their user-level code using the information passed from the event handlers, e.g., control loops used in page reclamation. PageFlex enables two-way shared-memory communication between user space and the eBPF event handlers with eBPF maps. For example, these maps can be used to share page-weight distributions with the agent process.

Enforcing policy outcomes

PageFlex acts on the policy outcomes using madvise hints from the user-level agent. The agent process uses the process_madvise() wrapper to issue madvise hints against a different address space. System calls add overhead, so PageFlex amortizes it by issuing each madvise call for a batch of pages.
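The batching idea can be sketched as follows. This is a minimal model, not the agent's code: it only prepares the address ranges that one batched hint call would carry and does not issue any system call. The helper names, the 4 KiB page size, and the batch limit of 512 ranges are all assumptions chosen for illustration.

```python
PAGE_SIZE = 4096  # assumed page size


def coalesce_pages(page_addrs, page_size=PAGE_SIZE):
    """Merge page-aligned addresses into contiguous (start, length) ranges."""
    ranges = []
    for addr in sorted(set(page_addrs)):
        if ranges and ranges[-1][0] + ranges[-1][1] == addr:
            # This page directly follows the previous range: extend it.
            start, length = ranges[-1]
            ranges[-1] = (start, length + page_size)
        else:
            ranges.append((addr, page_size))
    return ranges


def make_batches(ranges, max_ranges_per_call=512):
    """Split ranges into batches, one batch per (hypothetical) hint call."""
    return [ranges[i:i + max_ranges_per_call]
            for i in range(0, len(ranges), max_ranges_per_call)]
```

Coalescing first means that a run of adjacent pages costs one range entry rather than one entry per page, so fewer calls are needed for the same set of pages.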

Policy isolation

PageFlex provides separate “enclaves” for running different policies simultaneously for different groups of processes. Each enclave binds to a single kernel cgroup and applies a single policy to all the processes in that cgroup. The enclave separation is provided in event handlers (in eBPF), user-space code and in the communication between the two. If PageFlex fails, the cgroup bindings are gracefully released without any effect on the attached processes.
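The enclave model can be approximated by a small user-space sketch: handlers are keyed by a cgroup id, events from unbound cgroups fall through untouched, and a failing policy only loses its own binding. The class and method names here are hypothetical, and a Python exception stands in for a policy failure (real eBPF handlers do not raise exceptions).

```python
class Enclave:
    """One policy bound to one cgroup (identified here by a memcg id)."""

    def __init__(self, memcg_id, handlers):
        self.memcg_id = memcg_id
        # Maps an event name to a callable: old state -> new state.
        self.handlers = handlers


class Dispatcher:
    """Routes paging events to the enclave that owns the page's cgroup."""

    def __init__(self):
        self.enclaves = {}

    def bind(self, enclave):
        self.enclaves[enclave.memcg_id] = enclave

    def release(self, memcg_id):
        # Dropping the binding leaves the cgroup on the default policy.
        self.enclaves.pop(memcg_id, None)

    def dispatch(self, event, memcg_id, state):
        enclave = self.enclaves.get(memcg_id)
        if enclave is None:
            return state  # cgroup did not opt in
        handler = enclave.handlers.get(event)
        if handler is None:
            return state  # policy did not subscribe to this event
        try:
            return handler(state)
        except Exception:
            # Model of fail-safety: only this enclave is released.
            self.release(memcg_id)
            return state
```

In this model a buggy handler in one enclave never touches the state of pages in another cgroup, which mirrors the isolation goal described above.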

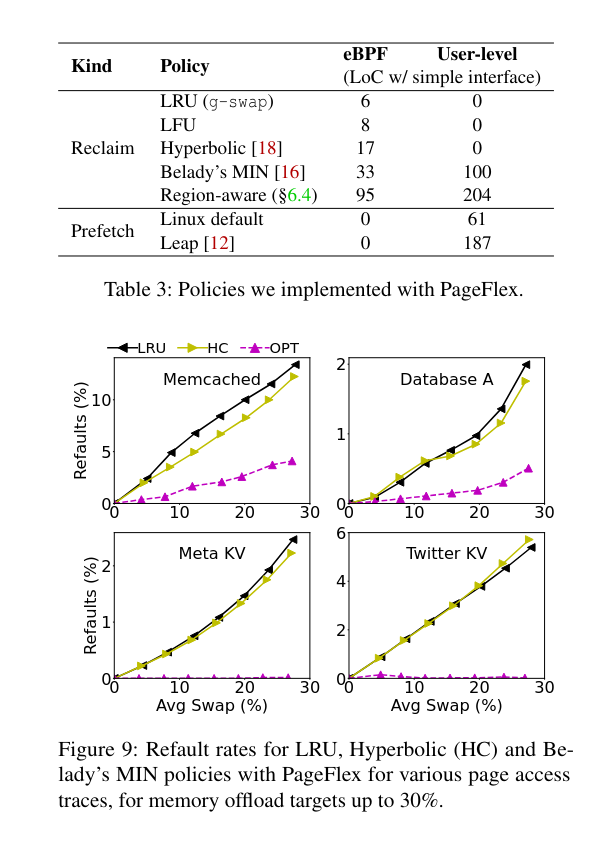

Policies in PageFlex

Unlocking policy customization

Proactive reclamation

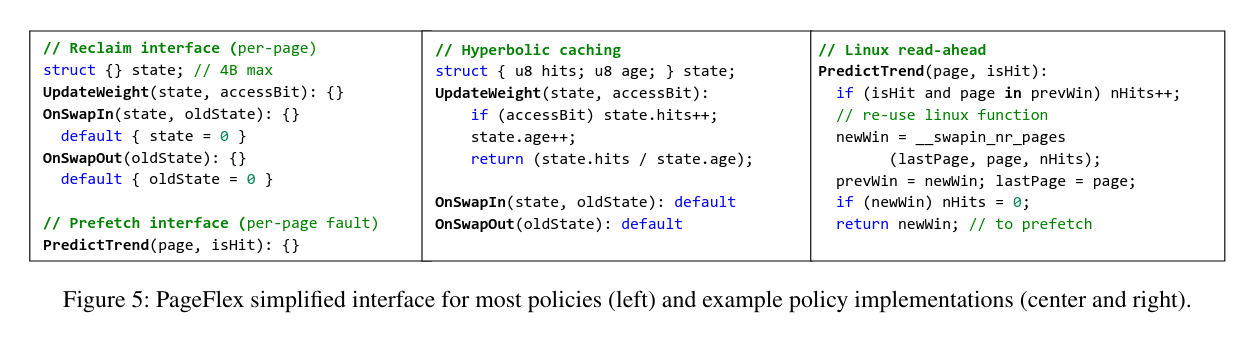

PageFlex employs periodic page-table scans to collect page access bits and allow page weight updates, exports page-weight distributions using eBPF maps, and runs the control loop in the user-level agent to determine the eviction threshold. To simplify policies, PageFlex abstracts away this common infrastructure and provides the interface shown in Figure 5 for most policies (like the one above) that can work with simple update functions.

By default, the state is reset once the page is swapped out. This works for most policies as they do not need the access history after a page gets reclaimed, but if they do, then the oldState can be retrieved as shown in the figure.

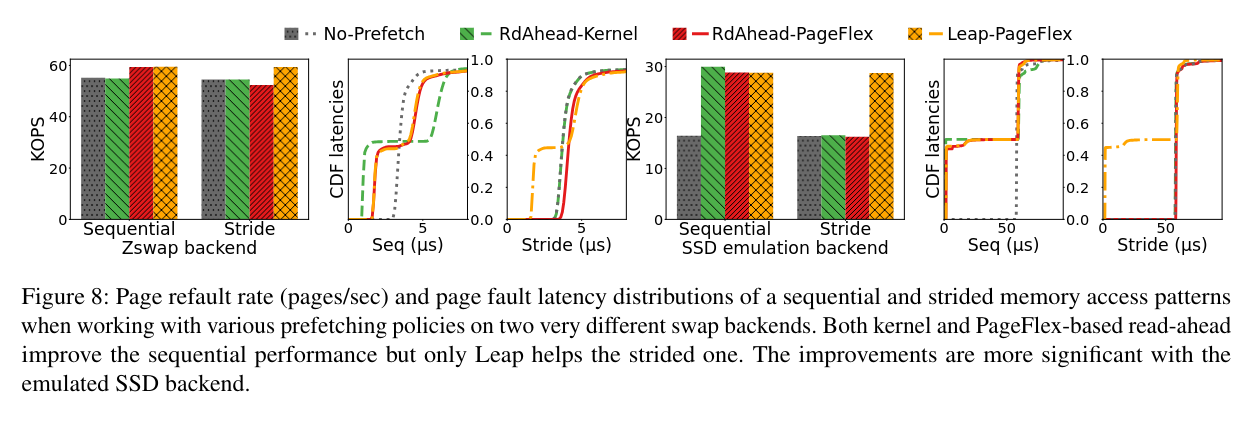
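The division of labor described above (a per-page update function, an exported weight distribution, and a user-level control loop) can be sketched as below. This is a minimal model under stated assumptions: the weight is taken to be an idle age in scan periods, the function names are hypothetical rather than the interface from the figure, and the control loop is reduced to picking a threshold for a target number of reclaimable pages.

```python
from collections import Counter

MAX_AGE = 255  # assumed saturation point for the idle-age weight


def update_weight(old_weight, accessed):
    """Idle-age policy: reset on access, otherwise age by one scan period."""
    return 0 if accessed else min(old_weight + 1, MAX_AGE)


def weight_histogram(weights):
    """Page-weight distribution, as handlers might export it through a map."""
    return Counter(weights)


def eviction_threshold(histogram, target_pages):
    """Smallest weight T such that at most target_pages pages have weight >= T."""
    threshold = MAX_AGE + 1
    reclaimable = 0
    # Walk from the coldest bucket down, stopping before the budget is exceeded.
    for weight in range(MAX_AGE, -1, -1):
        count = histogram.get(weight, 0)
        if reclaimable + count > target_pages:
            break
        reclaimable += count
        threshold = weight
    return threshold
```

In this sketch the update function would run per page during a scan, while the threshold computation only needs the histogram, so it can run in the user-level agent without seeing individual pages.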

Prefetching

Prefetching policies require page refault history for trend detection and predicting the next pages in the trend to prefetch, and the number of prefetching hits (through minor-faults) to adjust the predictions. PageFlex can provide both the signals with OnPageFault events. Prefetching policies define the PredictTrend function. The function could be run directly in eBPF event handler or in user-level code depending on complexity.

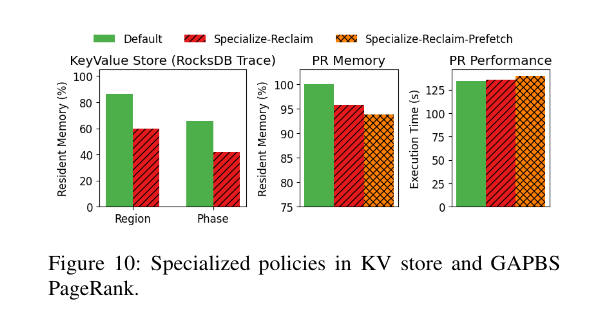
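One possible shape for a PredictTrend function is sketched below. The source only names the function; the majority-stride heuristic, the window size, the prefetch depth, and the helper `pages_to_prefetch` are assumptions chosen for illustration. The function looks at the strides between recent faulting pages and reports a trend only when one stride forms a strict majority.

```python
def predict_trend(fault_pages, window=8):
    """Return the majority stride among the last `window` faults, or None."""
    recent = fault_pages[-window:]
    strides = [b - a for a, b in zip(recent, recent[1:])]
    if not strides:
        return None
    # Boyer-Moore majority vote: one pass to find the only possible majority.
    candidate, count = None, 0
    for s in strides:
        if count == 0:
            candidate, count = s, 1
        elif s == candidate:
            count += 1
        else:
            count -= 1
    # Verify the candidate really is a strict majority and is a real stride.
    if candidate != 0 and strides.count(candidate) * 2 > len(strides):
        return candidate
    return None


def pages_to_prefetch(fault_pages, depth=4, window=8):
    """Extrapolate the detected stride `depth` pages past the last fault."""
    stride = predict_trend(fault_pages, window)
    if stride is None:
        return []
    last = fault_pages[-1]
    return [last + stride * i for i in range(1, depth + 1)]
```

A fuller policy would also use the prefetch-hit signal (minor faults) to grow or shrink `depth`; that feedback step is left out of this sketch.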

Application-specific policies

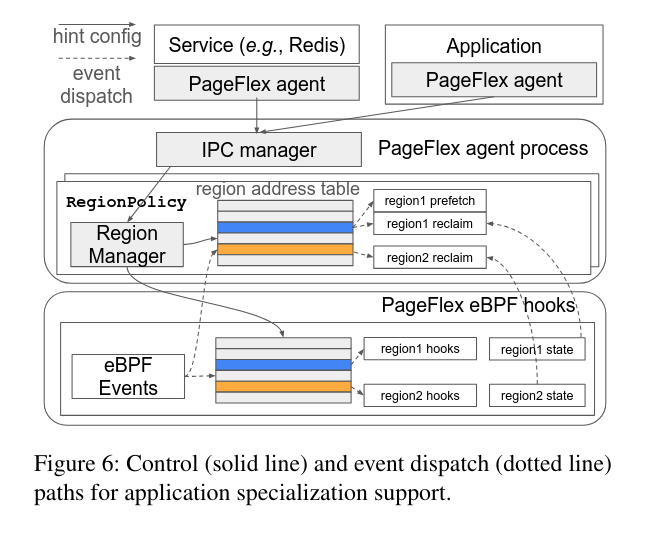

PageFlex supports applying distinct paging policies to different contiguous memory regions and execution phases within a user application.

Specialization in PageFlex is guided by application-provided hints. PageFlex offers an inter-process communication (IPC) interface, enabling authorized agents to provide hints. Each hint message associates an existing PageFlex policy, along with specific parameters, to a designated memory region represented by memory addresses. Agents can be implemented within the application process or as independent, authorized processes running alongside the application.

The figure shows a region address table in both the agent process box and the eBPF hooks box because each side keeps its own copy for fast lookups: one in user space, the other in kernel space. Each entry in the kernel-space table points to the corresponding region hooks, which are the policy event handlers for that region. Each region has its own state, which the agent process reads for its control loop.
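A region address table of this kind can be modeled as a bounded list of address ranges that is traversed on each event to find the region's policy. The sketch below is a user-space illustration only: the class and method names are hypothetical, and the cap of 256 entries mirrors the per-enclave slot count these notes give for the current implementation.

```python
MAX_REGIONS = 256  # slots per enclave, per the implementation notes


class RegionTable:
    """Maps [start, end) address ranges to the name of a sub-policy."""

    def __init__(self):
        self.regions = []  # list of (start, end, policy_name)

    def add_hint(self, start, end, policy_name):
        """Record an application hint that binds a region to a policy."""
        if start >= end:
            raise ValueError("empty or inverted region")
        if len(self.regions) >= MAX_REGIONS:
            raise RuntimeError("region table is full")
        self.regions.append((start, end, policy_name))

    def lookup(self, addr, default="default"):
        """Traverse the table to find the policy covering `addr`."""
        for start, end, policy_name in self.regions:
            if start <= addr < end:
                return policy_name
        return default
```

A linear traversal is used here because the table is small and bounded; a sorted table with binary search would be an alternative if the slot count grew.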

An enclave holds one RegionPolicy together with the processes, and their PageFlex agents, that share one cgroup.

Limits of policy expressibility

Because the eBPF verifier restricts program complexity, the code in event handlers must stay simple; anything more complex has to run in user space, which adds some overhead. The amount of overhead varies with the type of policy being run (e.g., machine-learning-based policies).

The per-page state is also limited: a 32-bit page weight, accessible only to eBPF code, must summarize a page’s entire access history. This could restrict policies that need more “information context”.

Implementation

PageFlex is implemented with a combination of Linux kernel changes, a user-space agent written in C++, and a collection of eBPF programs. They made 608 lines of changes to kernel code with most of it defining and introducing tracepoints at various paging events, and supporting the reserved field in the page struct. Excluding the policy-specific component, the agent constitutes 2900 lines of C++ code and the eBPF programs are 700 lines of code to support the key components and the simplified policy interfaces.

Tracepoints.

They add tracepoints in the kernel code to support PageFlex’s events (sometimes at multiple places for each event) and attach eBPF programs to run the event handlers where needed. Page allocations, frees, swap-ins and swap-outs are traced from cgroup page charge and uncharge functions that already track all possible ways pages enter and leave memory. Page-fault events are traced from page-fault handling code. For page-access scans, they depend on a kernel thread similar to g-swap’s kstaled whose period can be externally controlled. Currently, the thread scans all physical pages in the system in each scan; in future, this can be made cgroup-specific by moving to the (already-upstreamed) MGLRU’s page-table-based scans [8]. All tracepoints export the memcg id of the page so PageFlex can target required cgroups and a writable pointer to the reserved field in the page struct (described below).

Per-page persistable state.

To provide low-overhead per-page state for eBPF code, they reserve a 4-byte field in the kernel page struct (one exists for each page in memory) exclusively for eBPF use. By default, eBPF disallows writing to kernel data structures exported through the tracepoints. To allow write access, they use writeable tracepoints that allow explicitly whitelisting specific (sections of) kernel data structures (in this case, the reserved 4-byte field in the page struct) to be writable by eBPF programs. This is safe because they make sure this field is never read by the kernel, so any data written by the eBPF programs has no effect on the kernel’s operation. To persist the state across swap-outs (the page structs are freed for swapped-out pages), they re-use swap cgroup maps that already maintain cgroup ids for swap entries for cgroup-level swap accounting. When a page is swapped in, they retrieve the old state and provide it to the policy through the OnPageAlloc event.
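The life cycle of the per-page state across a swap-out and swap-in can be modeled with two dictionaries. This is a toy user-space sketch, not the kernel mechanism: `PageStateStore`, its method names, and the use of plain integers for page frames and swap entries are all assumptions for illustration.

```python
class PageStateStore:
    """Toy model of per-page state that survives a swap-out."""

    def __init__(self):
        self.resident = {}  # page frame number -> 32-bit state
        self.swapped = {}   # swap entry -> state saved at swap-out

    def on_swap_out(self, pfn, swap_entry):
        """The page struct goes away, so park the state under the swap entry."""
        self.swapped[swap_entry] = self.resident.pop(pfn, 0)

    def on_page_alloc(self, pfn, swap_entry=None):
        """Start the page with fresh state and return the old state, if any.

        Mirrors the idea of handing the old state to the policy on allocation;
        the policy decides whether to reuse it.
        """
        old_state = 0
        if swap_entry is not None:
            old_state = self.swapped.pop(swap_entry, 0)
        self.resident[pfn] = 0
        return old_state
```

A policy that does not care about pre-eviction history simply ignores the returned value, which matches the default of resetting state on swap-out.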

eBPF maps.

PageFlex extensively uses eBPF maps for eBPF/user space communication. For each policy, they use a BPF_MAP_TYPE_HASH map for policy configuration, a BPF_MAP_TYPE_ARRAY map for statistics, and a BPF_MAP_TYPE_RINGBUF map for event notifications to trigger user-level handlers if needed. Policies may employ additional maps where needed, too. For policies, they use histograms modeled on hash maps to communicate page-weight distributions. The region-aware policy maintains a hash map that stores the association between VMA segments and sub-policies, which is traversed to find the right policy handler at each event. (The current implementation reserves 256 slots per enclave.)

Evaluation

“KOPS” = how fast pages get promoted